Systems Engineering in the Age of AI

If you’ve spent time in any engineering community this year, you’ve definitely heard the question “Is AI coming for your job?” The answers you’ll usually hear are at two extremes: either AI will replace every software engineer, or nothing meaningful will change. Neither is accurate. Both sides treat software engineering as a single thing, but the impact of AI depends on what kind of engineering you actually do.

That variance is hard to see if you spend most of your time in application-level code. It becomes obvious if you work closer to the infrastructure layer. I’ve watched AI tools transform many work domains while barely touching others. Systems and infrastructure engineering face less near-term displacement from AI than application-level development. But the picture is more nuanced than “systems safe, apps screwed.” The real dividing line is complexity and ambiguity.

The Anthropic Economic Index, which analyzed over 500,000 coding interactions on Claude.ai, shows that 59% of AI coding queries target web and frontend languages. Roles focused on building simple applications and user interfaces may face disruption sooner than those focused on backend work. But AI is also making real advances in operational infrastructure through AIOps and the automation of routine operations work, and we need to be honest about that, too.

Let me walk through the evidence layer by layer.

The numbers look terrifying ... until you look closer

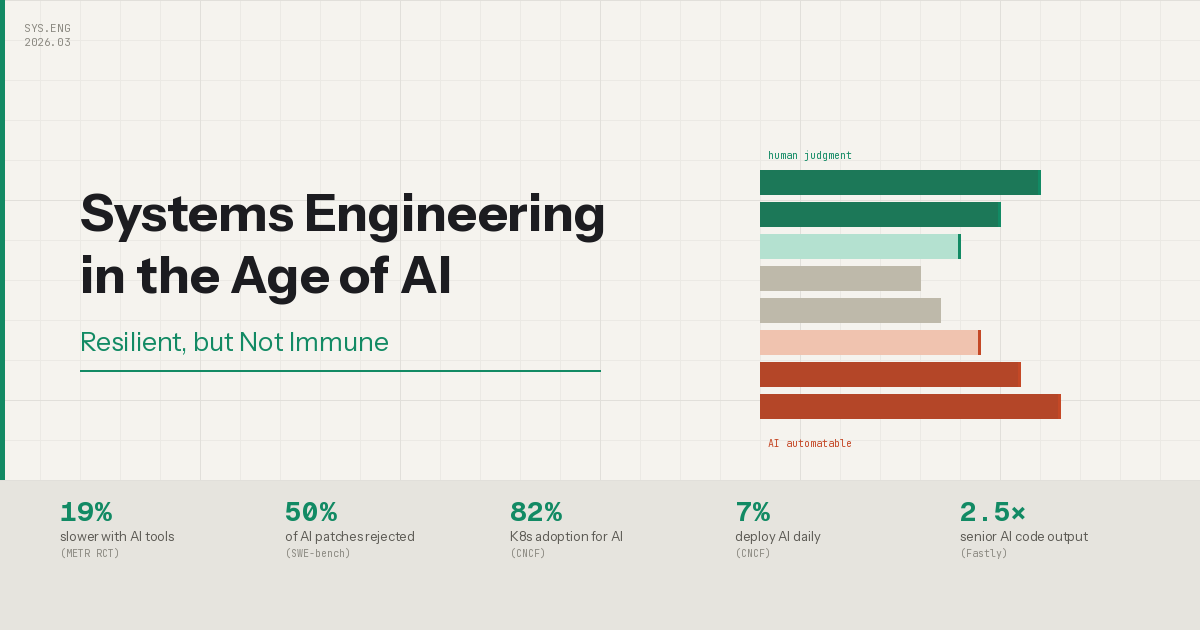

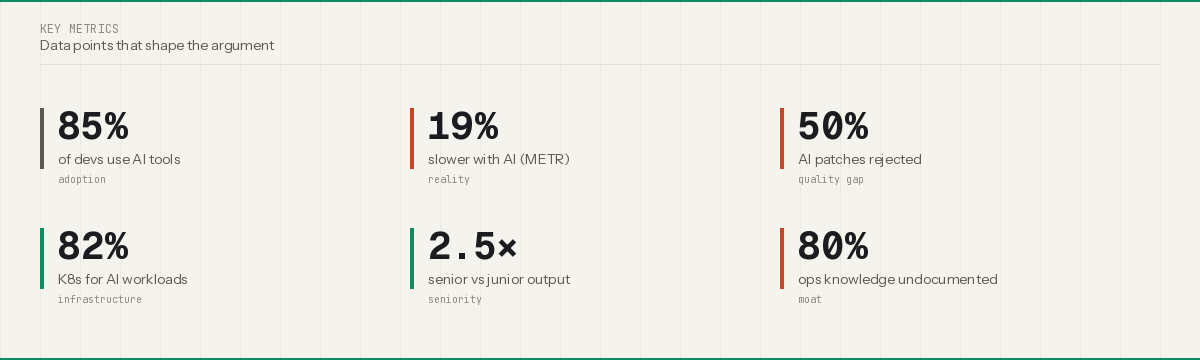

Cursor hit $2 billion ARR in ten months. GitHub Copilot has 20 million users. 85% of developers regularly use AI coding tools. AI writes between 27% and 46% of all new code, depending on how you measure it. If you stopped reading here, you’d assume the profession is finished. However, the evidence suggests otherwise.

The METR randomized controlled trial found that experienced developers were actually 19% slower when using AI tools on real tasks in large, familiar codebases. Developers believed they were 20% faster, a perception-reality gap of 39 percentage points. METR’s own February 2026 follow-up acknowledged limitations and suggested improvements since the study period, but the core finding remains: “we’re bad at self-assessing AI’s helpfulness”.

“Compiling and passing tests is not the same as production-ready.”

Similarly, Google’s DORA 2025 report found a correlation between AI adoption and higher throughput but lower stability. Teams ship faster and break more things. And METR’s SWE-bench merge study revealed that roughly 50% of patches that pass automated tests would be rejected by actual maintainers. Compiling and passing tests is not the same as “production-ready”.

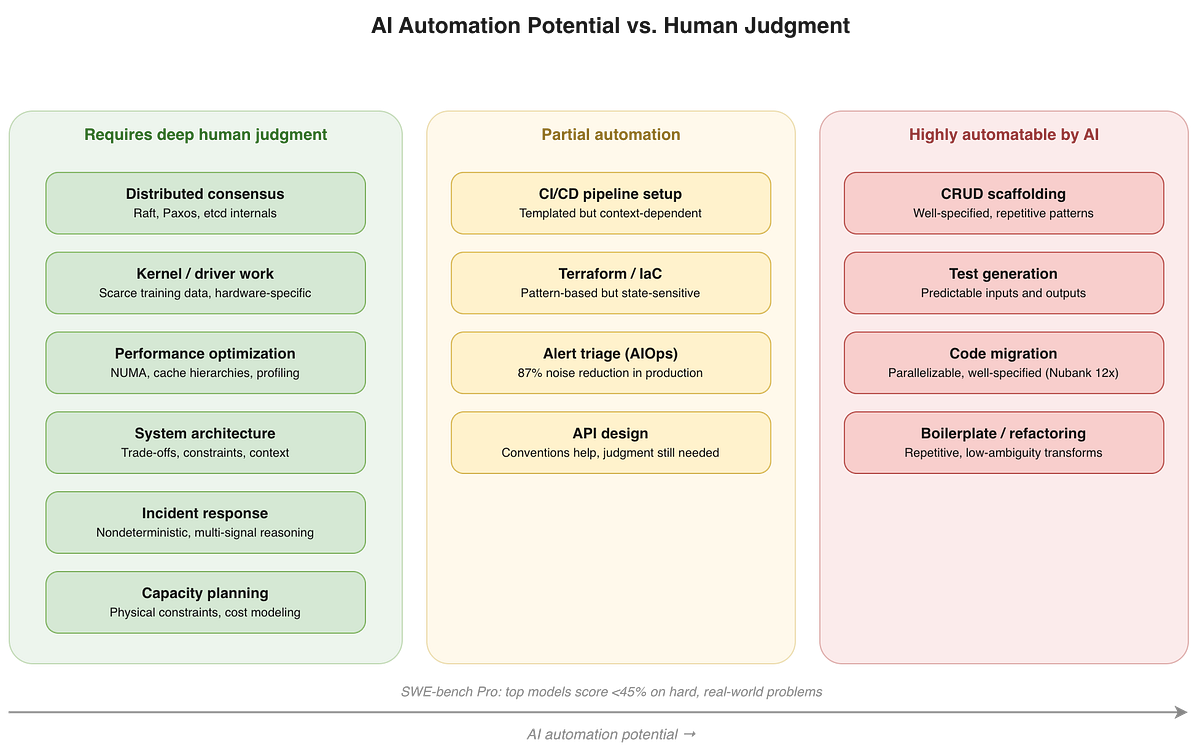

So, what does AI handle well? Many things that used to take hundreds of hours, like migrations, CRUD scaffolding, test generation, boilerplate, and repetitive refactoring. Devin’s flagship case study at Nubank, migrating a 6-million-line ETL monolith with a 12x efficiency improvement, is impressive because the task is repetitive, parallelizable, and well-specified. When you move to harder problems, performance drops sharply. The SWE-bench Pro benchmark, which tests against commercial codebases, drops top model scores to under 45% and below 20% on private repos.

The dividing line isn’t about which language or framework you use. It’s about task complexity.

Why distributed systems are structurally different

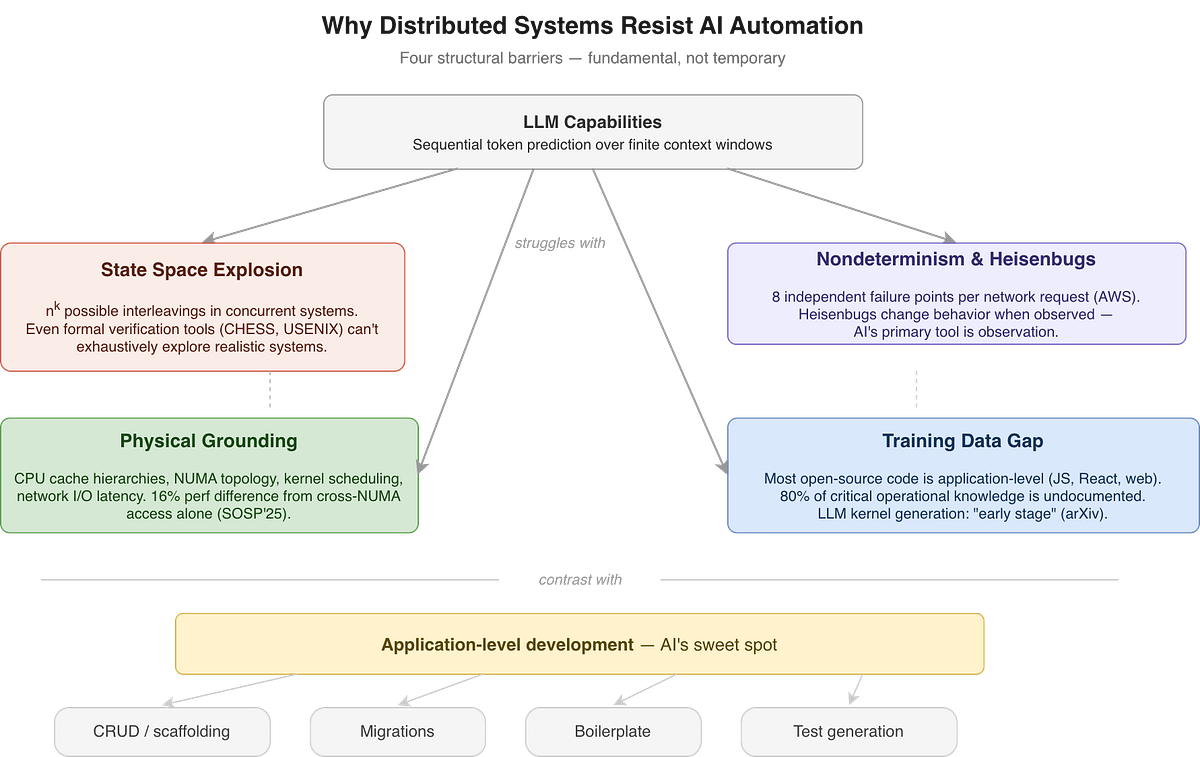

Have you tried asking an LLM to scaffold a REST API? It performs impressively. Now ask it to debug a split-brain in a three-node etcd cluster during a rolling upgrade, and it falls apart. The resilience of systems engineering rests on fundamental properties of distributed systems that are genuinely hard for sequence-prediction models to capture.

State Space Explosion

In a distributed system with n concurrent processes executing k steps, the number of possible interleavings grows as n^k [1]. LLMs have no mechanism for systematically exploring combinatorial state spaces; instead, they predict tokens sequentially within finite context windows. Even purpose-built formal verification tools struggle with realistic systems where thousands of synchronization operations create intractable search spaces. If dedicated verification can’t exhaustively explore these spaces, an LLM certainly can’t.
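To get a feel for how quickly this blows up, here is a minimal sketch that counts interleavings under a simplified model I am assuming for illustration: every step is atomic, each process keeps its own steps in order, and any ordering of steps across processes is possible. Under those assumptions the exact count is the multinomial coefficient (n·k)! / (k!)^n. The function name `interleavings` is mine, not from any library.

```python
from math import factorial


def interleavings(n: int, k: int) -> int:
    """Count orderings of n processes that each run k atomic steps.

    Assumes per-process order is preserved, so the count is the
    multinomial coefficient (n*k)! / (k!)^n.
    """
    return factorial(n * k) // factorial(k) ** n


# A few small configurations; the counts grow very quickly.
for n, k in [(2, 2), (2, 5), (3, 5), (4, 10)]:
    print(f"n={n}, k={k}: {interleavings(n, k):,} interleavings")
```

Two processes with two steps each already have 6 possible orderings, and three processes with five steps each have 756,756. Real systems have far more than fifteen steps in flight.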

Nondeterminism & Heisenbugs

AWS’s Builders’ Library identifies independent failures and nondeterminism as the two defining challenges: a single network request involves eight distinct steps, each of which can fail independently. Then there are Heisenbugs [2], bugs that change behavior or vanish when you try to observe them. AI’s primary tool is observation, which is precisely what makes these bugs disappear.
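A quick way to see why independent failures matter is to multiply them out. The sketch below assumes the eight steps fail independently and uses a made-up 99.9% success rate per step; neither the number nor the function name comes from AWS.

```python
from math import prod


def request_success_probability(step_success: list[float]) -> float:
    """Probability that every step succeeds, assuming independent failures."""
    return prod(step_success)


# Hypothetical numbers: eight steps, each succeeding 99.9% of the time.
steps = [0.999] * 8
p = request_success_probability(steps)
print(f"end-to-end success: {p:.2%}")
```

Under these assumed numbers, roughly 0.8% of requests fail somewhere along the way even though every individual step looks very reliable, and each of those failures can surface at a different step.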

Physical Grounding

Performance engineering requires intuition about CPU cache hierarchies, NUMA topology [3], kernel scheduling, and network I/O latency. A Tsinghua/SOSP’25 paper found a 16% performance difference from cross-NUMA memory access patterns alone. These aren’t codifiable as rules; they require contextual judgment about hardware-software interactions that comes from years of working close to the metal.

The Training Data Gap

Most open-source code is application-level. Kernel code, distributed consensus implementations, and storage internals are underrepresented. An arXiv survey on LLM-driven kernel generation concluded the field remains at an early stage. And roughly 80% of critical operational knowledge is undocumented, “institutional knowledge” that training data simply does not contain.

Bryan Cantrill, CTO of Oxide Computer and creator of DTrace [4], captures this well:

Distributed systems control planes are “fractally complicated” — complexity exists at every level of zoom. Adding a server, updating across an air gap, managing distributed consensus during upgrades — these are wicked problems requiring not just technical excellence but hard-won wisdom.

These structural barriers protect certain classes of system work. But infrastructure spans a wide spectrum of task complexity, and the routine end of that spectrum is already being automated.

AI IS Automating Infrastructure Work Too…

AI excels at incidents that resemble the ones it has been trained on. If your job is responding to known infrastructure failure patterns, that work is already being automated. AIOps platforms accelerate detection by 73% and resolve 62% of routine incidents without any human involvement.

But AI still fails to handle novel failure modes, cascading cross-service failures, and incidents that require correlation across infrastructure, application, and business logic layers.

An AWS developer advocate made a great point: AI works brilliantly where problems are pattern-based and standardized, such as build pipelines, deployments, test automation, security scanning, and observability. A routine Terraform deployment is more automatable than architecting a novel distributed payment system.

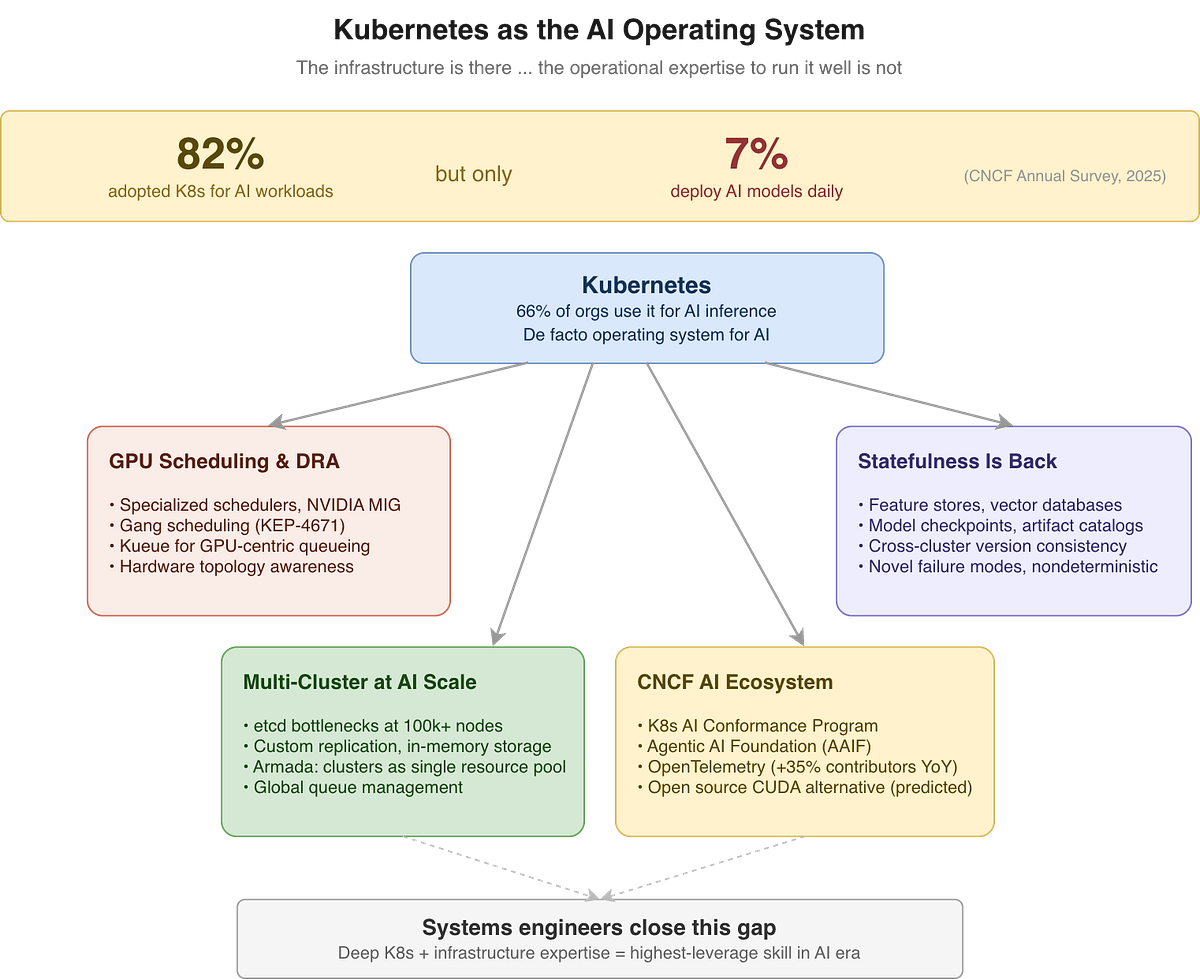

Kubernetes was not built for AI!

Yes, you read that right. It was designed for stateless web services: request in, response out, nothing persists. Now it is being asked to orchestrate GPU-bound training jobs and coordinate inference across hundreds of clusters. This mismatch between what K8s was designed for and what it is being asked to do is creating a new category of systems engineering.

The scale is already enormous. 66% of organizations run AI inference workloads on Kubernetes, but only 7% deploy AI models daily. The infrastructure is deployed, but the operational expertise to run it well is not. More broadly, cloud-native adoption has reached 15.6 million developers. 90% of enterprises consider open source critical to their AI strategies, and 82% are building custom AI solutions rather than buying off-the-shelf.

This gap is where systems engineers become irreplaceable. AI workloads on Kubernetes aren’t just “another container deployment”; they bring fundamentally different operational demands.

GPU scheduling and DRA (resource allocation)

Let’s take a step back and look at why Kubernetes needs to know about the AI workload. AI workloads need GPU-aware scheduling, partitioning of expensive accelerators via NVIDIA MIG, coordination of distributed training through gang scheduling [5] (all pods start together or none do), and management of multi-tenant demand through systems like Kueue and Dynamic Resource Allocation (DRA). Getting this wrong means either GPU starvation or a crazy cloud bill, and the debugging requires understanding hardware topology, not just YAML.
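The all-or-nothing idea behind gang scheduling is easier to see in code. This is a toy sketch, not how Kueue or the Kubernetes scheduler actually works: the `Node` class, the `gang_schedule` function, and the first-fit placement heuristic are all assumptions I made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    free_gpus: int


def gang_schedule(pod_gpu_requests: list[int], nodes: list[Node]) -> Optional[dict[int, str]]:
    """Place every pod or none.

    Returns {pod_index: node_name} if all pods fit. Otherwise returns None
    and leaves the nodes untouched.
    """
    remaining = {node.name: node.free_gpus for node in nodes}  # tentative view
    placement: dict[int, str] = {}
    # Try the largest requests first (a simple first-fit-decreasing heuristic).
    for pod in sorted(range(len(pod_gpu_requests)), key=lambda i: -pod_gpu_requests[i]):
        need = pod_gpu_requests[pod]
        target = next((name for name, free in remaining.items() if free >= need), None)
        if target is None:
            return None  # one pod cannot start, so the whole gang waits
        remaining[target] -= need
        placement[pod] = target
    # Commit only after every pod has a slot.
    for node in nodes:
        node.free_gpus = remaining[node.name]
    return placement


cluster = [Node("gpu-a", 4), Node("gpu-b", 2)]
print(gang_schedule([2, 2, 2], cluster))  # all three pods fit
print(gang_schedule([1], cluster))        # nothing left, so None
```

The key design choice is working on a tentative copy and committing at the end, so a job that cannot fully start never holds GPUs hostage. A heuristic like this can also miss placements that exist, which is part of why production schedulers are much more involved.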

Statefulness Is Back

AI pipelines rely on persistent systems: feature stores, vector databases, model checkpoints, and artifact catalogs. All of these must remain portable, recoverable, and version-consistent across clusters and regions. Kubernetes was originally designed for stateless workloads, so running stateful AI infrastructure on it well demands deep systems knowledge that LLMs can’t pattern-match from existing answers.

Multi-Cluster at AI Scale

This is where Kubernetes is being pushed to its limits. Standard etcd becomes a bottleneck at ultra-scale, prompting cloud providers to innovate with custom replication systems and in-memory storage for 100,000-node clusters. Solutions like Armada treat multiple clusters as a single resource pool with global queue management. This is distributed systems engineering at its most complex.

CNCF AI Ecosystem

The ecosystem is formalizing around these challenges. The CNCF [6] launched an AI Conformance Program to standardize AI workloads across clusters. The Linux Foundation formed the Agentic AI Foundation with contributions from Anthropic [7] (MCP), Block (goose), and OpenAI (AGENTS.md).

Why open source itself resists AI automation

AI-generated pull requests are flooding CNCF projects. The Kyverno maintainers did not introduce an AI Usage Policy out of the blue. They did it because AI contributions were arriving faster than their reviewers could handle. More AI code does not help when the bottleneck is a human reviewer.

Projects like Kubernetes, Ray, and OpenTelemetry are exactly the kind of work AI struggles to automate: massive codebases, complex governance, global contributors, and the expectation of high accuracy, since a bug can cascade across every cloud provider’s production.

The open-source infrastructure stack compounds expertise over time and rewards deep contextual judgment. It is growing, and it is the kind of work AI won’t be able to replicate.

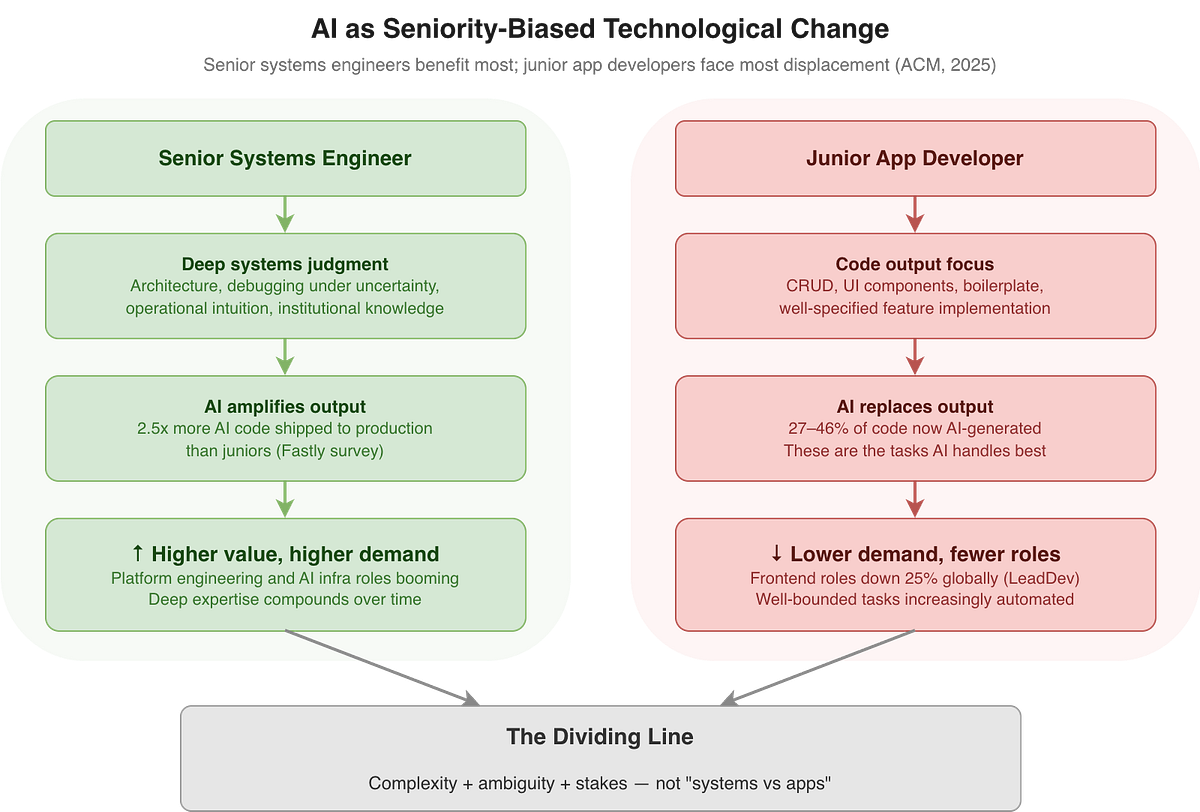

What to do about it

Instead of asking whether AI will replace engineers, the more useful question is which engineers AI makes more valuable. An ACM Communications paper calls this “seniority-biased technological change.” AI amplifies the judgment of engineers who have deep systems experience. A Fastly survey points the same way: senior developers ship 2.5 times more AI-generated code to production than juniors because they know when AI output is wrong.

The question is not whether AI will replace engineers, but which engineers it makes more valuable and which it makes redundant.

The bottleneck has moved

AI is shifting software teams from a code-production constraint to a code-review constraint. The limiting factor is no longer writing speed but verification speed. The METR merge study found that half of the AI-generated patches that pass tests would be rejected by human maintainers.
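Here is a back-of-the-envelope model of that shift. All numbers are hypothetical except the rough 50% acceptance rate, which I borrowed from the merge study figure above. The function name and parameters are mine.

```python
def shipped_per_week(patches_written: int, review_capacity: int, acceptance_rate: float) -> float:
    """Patches that reach production: capped by review, then filtered by acceptance."""
    reviewed = min(patches_written, review_capacity)
    return reviewed * acceptance_rate


# Hypothetical team before AI: writing is the constraint.
before = shipped_per_week(patches_written=20, review_capacity=40, acceptance_rate=0.9)

# Same team with AI: five times the patches, same reviewers, lower acceptance.
after = shipped_per_week(patches_written=100, review_capacity=40, acceptance_rate=0.5)

print(f"before AI: {before:.0f} shipped, after AI: {after:.0f} shipped")
```

In this made-up scenario, writing five times as many patches moves shipped output from 18 to 20 per week. The only levers that matter once writing is cheap are review capacity and the quality of what reaches review.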

“AI is moving the bottleneck from code production to code review”

Engineering team composition shifts accordingly: fewer engineers valued mainly for writing new code, and more who review, architect, and decide what not to ship, evaluating whether the code handles edge cases and respects system invariants.

The junior paradox

This creates a painful squeeze for early-career engineers. The traditional path of writing simple features, receiving reviews, and gradually building judgment is being compressed from both ends. AI writes the simple features, while seniors are too busy reviewing output. There is less time to mentor juniors than before.

But review skill requires deep coding fundamentals that come from writing and debugging real systems.

This is why fresh graduates need stronger fundamentals than previous generations, because their first meaningful contribution is often evaluating whether AI-generated code is safe to ship.

Where to place your bets

Kelsey Hightower [8] frames AI as surface-level technology: programs still run on hardware, operating systems, and network protocols.

New categories are emerging fast. AI infrastructure engineering is the fastest-growing discipline. MLOps and LLMOps are emerging as distinct fields.

If you are a full-stack application developer shipping CRUD features, you need to be worried. The escape route is not learning a new framework but moving down the stack. Learn how the scheduler places your pods, why your p99 spikes during GC, and what happens to your writes during a network partition.
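As a taste of what "moving down the stack" looks like, here is a small sketch of why tail latency tells a different story than the average. The workload is invented: 1,000 requests that normally take 10 ms, with 20 of them stalled by an assumed 200 ms GC pause. The helper name `p99` is mine.

```python
from statistics import mean, quantiles


def p99(latencies_ms: list[float]) -> float:
    """99th percentile, using the inclusive method over 100 cut points."""
    return quantiles(latencies_ms, n=100, method="inclusive")[98]


# Hypothetical workload: 980 fast requests, 20 that hit a 200 ms pause.
latencies = [10.0] * 980 + [210.0] * 20

print(f"mean: {mean(latencies):.1f} ms")
print(f"p99:  {p99(latencies):.1f} ms")
```

With these numbers the mean is 14 ms while the p99 is 210 ms. A dashboard that only shows averages would call this service healthy, and knowing to look at the tail is exactly the kind of judgment that comes from working closer to the runtime.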

If you are already in infrastructure, the fear is real, but not urgent (yet). Deploying a K8s cluster is no longer the problem. You need to go deeper and understand cross-region state consistency, FinOps for inference workloads, and debugging novel failure modes at scale.

You have probably heard me say this a hundred times, but it matters more than ever now: contribute to open source projects in the CNCF AI ecosystem. Engineers who shape these standards will define how AI infrastructure works for the next decade.

If you are early in your career, this is the hardest moment to enter software engineering and potentially the best. The junior pipeline is contracting, but demand for distributed systems and infrastructure expertise has never been higher. The bar is rising. The returns for clearing it are rising even faster.

[1] https://link.springer.com/article/10.1007/s00446-025-00480-0

[2] https://en.wikipedia.org/wiki/Heisenbug

[3] https://en.wikipedia.org/wiki/Non-uniform_memory_access

[4] https://en.wikipedia.org/wiki/DTrace

[5] https://en.wikipedia.org/wiki/Gang_scheduling

[6] https://en.wikipedia.org/wiki/Cloud_Native_Computing_Foundation

[7] https://en.wikipedia.org/wiki/Anthropic

[8] https://en.wikipedia.org/wiki/Kelsey_Hightower